What is qq?

qq is a wrapper around batch scheduling systems designed to simplify job submission and management. It is inspired by NCBR's Infinity ABS but aims to be more decentralized and easier to extend. It also supports both PBS Pro and Slurm, while making it straightforward to add compatibility with other batch systems as needed.

Although qq and Infinity ABS share the same philosophy and use very similar commands, they share no code.

Disclaimer: qq is developed for the internal use of the Robert Vácha Lab and may not work on clusters other than those officially supported (Robox, Sokar, Metacentrum, LUMI, Karolina).

Installation

This section explains how to easily install qq on different clusters.

All installation scripts assume that you are using bash as your shell. If you use a different shell, follow this section of the manual.

Updating qq

To reinstall or update qq on a cluster, just run the installation command for the given cluster again.

Updating qq is usually safe, even if you have running qq jobs on the cluster. Jobs that are already running will continue using the old version of qq. Loop jobs will automatically switch to the updated version in their next cycle.

Installing on Robox

To install qq on the Robox cluster (computers of the RoVa Lab), log in to your desktop and run:

curl -fsSL https://github.com/VachaLab/qq/releases/latest/download/qq-robox-install.sh | bash

This command downloads the latest version of qq, installs it to your home directory on your desktop, and then installs it to the home directory of the computing nodes.

To finish the installation, either open a new terminal or source your .bashrc file.

Note: This does not install qq on other desktops with separate local home directories.

If you want to use qq on other desktops, you'll need to install it there separately.

(Not installing it there does, however, help prevent accidentally running jobs on someone else's desktop.)

For more details about the Robox cluster, see Robox cluster specifics.

Installing on Sokar

To install qq on the Sokar cluster (managed by NCBR), log in to sokar.ncbr.muni.cz and run:

curl -fsSL https://github.com/VachaLab/qq/releases/latest/download/qq-sokar-install.sh | bash

This downloads the latest version of qq and installs it to the shared home directory of the cluster nodes.

To complete the installation, open a new terminal or source your .bashrc file.

For more details about the Sokar cluster, see Sokar cluster specifics.

Installing on Metacentrum

To install qq on Metacentrum, log in to any Metacentrum frontend and run:

curl -fsSL https://github.com/VachaLab/qq/releases/latest/download/qq-metacentrum-install.sh | bash

This command downloads the latest version of qq and installs it into your home directory on brno12-cerit. The script then adds qq's location on this storage to your PATH across all Metacentrum machines.

Because qq runs significantly slower when stored on non-local storage, the installation script also configures the .bashrc files of all Metacentrum machines to automatically copy qq from brno12-cerit to their local scratch space on login. This improves the responsiveness of qq operations.

To complete the installation, either open a new terminal or source your .bashrc file.

For more details about the Metacentrum clusters, see Metacentrum clusters specifics.

Installing on Karolina

To install qq on the Karolina supercomputer (IT4Innovations), log in to karolina.it4i.cz and run:

curl -fsSL https://github.com/VachaLab/qq/releases/latest/download/qq-karolina-install.sh | bash

This downloads the latest version of qq and installs it to the shared home directory of the cluster nodes.

To complete the installation, open a new terminal or source your .bashrc file.

For more details about Karolina, see Karolina specifics.

Installing on LUMI

To install qq on the LUMI supercomputer, log in to lumi.csc.fi and run:

curl -fsSL https://github.com/VachaLab/qq/releases/latest/download/qq-lumi-install.sh | bash

This downloads the latest version of qq and installs it to the shared home directory of the cluster nodes.

To complete the installation, open a new terminal or source your .bashrc file.

For more details about LUMI, see LUMI specifics.

Installing manually

Installing a pre-built version

To install a pre-built version of qq on a single computer or on several computers sharing the same home directory, run:

curl -fsSL https://github.com/VachaLab/qq/releases/latest/download/qq-install.sh | \
    bash -s -- $HOME https://github.com/VachaLab/qq/releases/latest/download/qq-release.tar.gz

To finish the installation, either open a new terminal or source your .bashrc file.

Installing a pre-built version for other shells

If you're not using bash, you'll need to modify the qq-install.sh script.

First, download it:

curl -OL https://github.com/VachaLab/qq/releases/latest/download/qq-install.sh

Then edit this line to match your shell's RC file:

BASHRC="${TARGET_HOME}/.bashrc"

# For example, if you use zsh:

BASHRC="${TARGET_HOME}/.zshrc"

Next, make the script executable and run it:

chmod u+x qq-install.sh

./qq-install.sh $HOME https://github.com/VachaLab/qq/releases/latest/download/qq-release.tar.gz

Building qq from source

To build and install qq yourself, you'll need git and uv installed.

First, clone the qq repository:

git clone git@github.com:VachaLab/qq.git

Then navigate to the project directory and install the dependencies:

cd qq

uv sync --all-groups

Build the package using PyInstaller:

uv run pyinstaller qq.spec

PyInstaller will create a directory named qq inside dist. Copy that directory wherever you want and add it to your PATH.

If you want the qq cd command to work, add the following shell function to your shell's RC file:

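qq() {
    # intercept 'qq cd' so that the current shell itself changes directory
    if [[ "$1" == "cd" ]]; then
        # pass help requests straight to the qq executable
        for arg in "$@"; do
            if [[ "$arg" == "--help" || "$arg" == "-h" ]]; then
                command qq "$@"
                return
            fi
        done
        # capture the directory printed by 'qq cd' and change into it
        target_dir="$(command qq cd "${@:2}")"
        cd "$target_dir" || return
    else
        # forward every other command unchanged
        command qq "$@"
    fi
}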

If you want the autocomplete for the qq commands to work, add the following line to your shell's RC file:

eval "$(_QQ_COMPLETE=bash_source qq)"

To finish the installation, either open a new terminal or source your .bashrc file.

Running a job

This section demonstrates how to run a basic qq job by performing a simple Gromacs simulation on the Robox cluster. It assumes that you have already successfully installed qq on the cluster.

1. Preparing an input directory

Start by creating a directory for the job on shared storage (you can also submit from local storage on your computer, but that is not recommended). This directory should contain all necessary simulation input files: in this example, mdp, gro, cpt, ndx, top, and itp files.

2. Preparing a run script

Next, prepare a run script that creates a tpr file from the input files using gmx grompp, and then runs the simulation with gmx mdrun. We’ll configure the simulation to use 8 OpenMP threads.

Note that all qq run scripts must start with the correct shebang line:

#!/usr/bin/env -S qq run

A complete example of a run script:

#!/usr/bin/env -S qq run

# activate the Gromacs module
metamodule add gromacs/2024.3-cuda

# prepare a TPR file
gmx_mpi grompp -f md.mdp -c eq.gro -t eq.cpt -n index.ndx -p system.top -o md.tpr

# run the simulation using 8 OpenMP threads
gmx_mpi mdrun -deffnm md -ntomp 8 -v

Hint: You can use the qq shebang command to easily add the qq run shebang to your script.

Save this file as run_job.sh and make it executable:

chmod u+x run_job.sh

3. Submitting the job

Submit the job using qq submit:

qq submit run_job.sh -q cpu --ncpus 8 --walltime 1d

This submits run_job.sh to the cpu queue, requesting 8 CPU cores and a walltime of one day. All other parameters are determined by the queue or qq’s default settings.

Note that on Karolina and LUMI, you also have to specify the --account option, providing the ID of the project you are associated with.

The batch system then schedules the job for execution. Once a suitable compute node is available, the job runs through qq run, a wrapper around bash that prepares the working directory, copies files, executes the script, and performs cleanup. You can read more about how exactly this works in this section of the manual.

4. Inspecting the job

After submission, you can inspect the job using qq info, access its working directory on the compute node with qq go, or terminate it using qq kill. For an overview of all qq commands, see this section of the manual.

5. Getting the results

Once the job finishes, the resulting Gromacs output files will be transferred from the working directory back to the original input directory. You can verify that everything completed successfully using qq info.

If your job failed (crashed) or was killed, only the qq runtime files are by default transferred to the input directory to ensure it remains in a consistent state. In these cases, the working directory on the compute node is preserved, allowing you to inspect the job files directly using qq go or to copy them back to the input directory using qq sync. On some systems, you may also want to explicitly delete the working directory afterward — to do this, use qq wipe. If you want to try running the failed/killed job again with the same parameters, respawn it using qq respawn.

Run scripts

For more complex setups — particularly for running Gromacs simulations in loops — qq provides several ready-to-use run scripts. These scripts are fully compatible with all qq-supported clusters, including Metacentrum-family clusters, Karolina, and LUMI.

Job types

qq currently supports three job types: standard, loop, and continuous.

standard jobs are the default type. Any job for which you don't specify a job-type when submitting is considered standard. Read more about standard jobs here.

loop jobs automatically submit their continuation before finishing. They also track their cycle and archive output files. Read more about them here.

continuous jobs are "poor man's loop jobs". Similarly to loop jobs, they automatically submit their continuation before finishing, but they do not track their cycle and do not perform any archiving operations. Read more about them here.

Standard jobs

A standard job is the default qq job type. This section describes the full lifecycle of a standard qq job.

1. Submitting the job

Submitting a qq job is done using qq submit.

qq submit submits the job to the batch system and generates a qq info file containing metadata and details about the job. This info file is named after the submitted script, has the .qqinfo extension, and is located in the input directory (often also called the submission or, somewhat confusingly, job directory).

Once submitted, the batch system takes over, finding a suitable place and time to execute your job. As a user, you don't need to do anything else except wait for the job to run.

2. Preparing the working directory

When the batch system allocates a machine for your job, the qq run environment takes over. It first prepares a working directory for the job on the execution node.

If you requested the job to run in the input directory (by submitting with --workdir=input_dir or the equivalent --workdir=job_dir), the input directory is used directly as the working directory, and no additional setup is required.

If you requested the job to run on scratch (the default option for all environments), a working directory is created inside your allocated scratch space, and all files and directories in the input directory are copied there — except for the qq runtime files (.qqinfo and .qqout) and the "archive" directory if you are running a loop job (discussed later). During submission, you can also specify additional files you explicitly do not want to copy to the working directory.

Once the working directory is ready, qq updates the info file to mark the job state as running. Only then is your submitted script executed.

In all environments supported by qq, the working directory is placed on scratch storage by default. This is typically not only faster but also safer: qq generally recommends keeping the job execution environment separate from the input directory until the job finishes successfully. This ensures that, if something goes wrong, your original input data remain untouched, no matter what your executed script did. However, all qq-supported environments also allow you to use --workdir=input_dir if you prefer to run the job directly in the input directory.

3. Executing the script

After preparing the working directory, the submitted script is executed using bash by default. You can also specify a different interpreter, if you wish, such as Python.

The script should exit with code 0 if everything ran successfully, or a non-zero code to indicate an error. The exit code is passed back to qq, which sets the appropriate job state (finished for 0, failed for anything else).
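As a rough illustration of how these exit codes can be read, the following bash sketch maps an exit code to the job state described above, also using the 90–99 range explained under Additional notes. The function name describe_exit_code is hypothetical and not part of qq, and the special QQ_NO_RESUBMIT exit code is deliberately ignored.

```bash
#!/usr/bin/env bash
# Hypothetical helper, not part of qq: interprets a job's exit code.
# The QQ_NO_RESUBMIT special case is deliberately ignored here.
describe_exit_code() {
    local code="$1"
    if (( code == 0 )); then
        echo "finished"
    elif (( code == 99 )); then
        echo "failed: critical or unexpected qq error"
    elif (( code >= 90 && code <= 98 )); then
        echo "failed: a qq operation probably failed, check the .qqout file"
    else
        echo "failed: most likely an error in the script itself"
    fi
}

describe_exit_code 0
describe_exit_code 1
describe_exit_code 95
```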

Standard output from your script is saved to a file named after your script with the .out extension. Standard error output is stored in a similar file with the .err extension.

4. Finalizing execution

After the script finishes, qq performs cleanup.

If your job ran in the input directory, cleanup is simple: qq updates the job's state (finished or failed) in the qq info file, and the execution ends.

If your job ran on scratch, cleanup depends on the script's exit code.

By default, if the script finished successfully (exit code 0), all files from the working directory are copied back to the input directory, and the working directory is deleted. Finally, qq sets the job state to finished.

If the job failed (exit code other than 0), the working directory is left intact on the execution machine for inspection (you can open it using qq go, download it using qq sync, or delete it using qq wipe). Only the qq runtime files with file extensions .err and .out are copied to the input directory so you can easily check what exactly went wrong during the execution. Finally, the job state is set to failed.
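The two outcomes above can be mimicked locally with a small bash sketch. This is a simplified illustration under stated assumptions, not qq's implementation: a temporary directory stands in for the scratch working directory, qq runtime files and retries are ignored, and the names run_on_scratch and WORK_DIR are hypothetical.

```bash
#!/usr/bin/env bash
# Simplified illustration only; qq itself also handles runtime files,
# retries, remote nodes, and more. All names here are hypothetical.
run_on_scratch() {
    local input_dir="$1" script="$2"
    local status=0

    # a temporary directory stands in for the scratch working directory
    WORK_DIR="$(mktemp -d)"

    # copy the contents of the input directory to the working directory
    cp -r "$input_dir"/. "$WORK_DIR"/

    # execute the script inside the working directory
    ( cd "$WORK_DIR" && bash "./$script" ) || status=$?

    if (( status == 0 )); then
        # success: copy everything back and delete the working directory
        cp -r "$WORK_DIR"/. "$input_dir"/
        rm -rf "$WORK_DIR"
        echo "finished"
    else
        # failure: keep the working directory, leave the input directory alone
        echo "failed"
    fi
    return "$status"
}
```

Calling run_on_scratch with an input directory and a script name prints the resulting job state and returns the script's exit code; after a failure, the preserved directory is available in WORK_DIR.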

Regardless of the result, qq creates an output file (named after your script with the .qqout extension) in the input directory. This file contains basic information about what qq did and when the job finished. Depending on the batch system, this file may appear either after job completion (PBS) or immediately after the job starts being executed (Slurm).

The decision not to copy data from failed runs back to the input directory is a deliberate part of qq's design philosophy. It prevents temporary or partially written files from polluting the input directory and ensures you can rerun the job cleanly after fixing the issue. In some cases, your script may even modify input files during execution, and copying them back after a failure would overwrite data necessary for a rerun. If you need anything from a failed run, you can copy selected files, or the entire working directory, using qq sync. You can, however, change this default behavior by providing a different transfer mode when submitting the job.

Killing a qq job

If your job is killed (either manually via qq kill or automatically by the batch system, for example if it exceeds walltime), all files remain in the working directory on the execution machine and only qq runtime files are copied to the input directory. qq then stops the running script and marks the job state as killed.

Submitting the next job

After a job has completed successfully, you may want to submit a new one: for example, to proceed to the next stage of your workflow. If you try to submit another qq job from the same directory as the previous one, you will however encounter an error:

ERROR Detected qq runtime files in the submission directory. Submission aborted.

This behavior is intentional. qq enforces a one-job-per-directory policy to promote reproducibility and maintain organized workflows. Each job should reside in its own dedicated directory. (You can always override this policy by using qq clear --force but that is not recommended.)

If your previous job crashed or was terminated and you wish to rerun it, you can remove the existing qq runtime files using qq clear.

Even analysis jobs that operate on results from earlier runs are recommended to be submitted from their own directories. Although qq copies only the files and directories located in the job’s input directory by default, you can explicitly include additional files or directories using the --include option of qq submit. These included items are copied to the working directory for the duration of the job, but they are not copied back after job completion. This allows you to maintain a clean one-job-per-directory workflow while still accessing any extra data your analysis requires.

Example directory structure:

- simulation/1_run → directory for the simulation job

- simulation/2_analysis → directory for the analysis job, submitted with --include ../1_run

Additional notes

- Most operations during working directory setup and cleanup are automatically retried in case of errors. This helps prevent job crashes caused by temporary storage or network issues. If an operation fails, qq waits a few minutes and retries — up to three attempts. After three failures, qq stops and reports an error. Note that qq does not retry execution of your script itself.

- If your job fails with an exit code in the range 90–99, this usually means a qq operation failed. Check the qq output file (.qqout) for more details. An exit code of 99 indicates a critical or unexpected error, which usually means a bug in qq. Please report such cases.
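The retry behavior described in the first note can be sketched in bash as follows. This is only an illustration of the pattern, not qq's actual code: the function name retry is hypothetical, and the delay is a parameter here (qq is described as waiting a few minutes between attempts).

```bash
#!/usr/bin/env bash
# Hypothetical sketch of a retry loop; not taken from qq.
# Usage: retry MAX_ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
retry() {
    local max_attempts="$1" delay_seconds="$2"
    shift 2
    local attempt
    for (( attempt = 1; attempt <= max_attempts; attempt++ )); do
        # stop as soon as the command succeeds
        if "$@"; then
            return 0
        fi
        echo "attempt ${attempt}/${max_attempts} failed: $*" >&2
        # wait before the next attempt, but not after the last one
        if (( attempt < max_attempts )); then
            sleep "$delay_seconds"
        fi
    done
    # all attempts failed: report an error to the caller
    return 1
}
```

For example, retry 3 180 cp -r input/. workdir/ would try the copy up to three times, waiting three minutes between attempts.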

Loop jobs

Loop jobs are jobs that automatically submit their continuation at the end of execution while tracking the current cycle and archiving output files. This section describes how they differ from standard jobs. Please read the section about standard jobs first — otherwise, this may be difficult to follow.

To turn a job into a loop job, you must set two qq submit options:

- job-type to loop, and

- loop-end to specify the last cycle of the loop job.

Do loop jobs seem unnecessarily complex for your use-case? Do you just want a job that submits its own continuation without worrying about archival and cycle tracking? Take a look at continuous jobs—they might be what you need.

Loop job cycles

Each loop job consists of multiple cycles. Every cycle is a separate job from the batch system's perspective. Before a cycle finishes, it submits the next one and then ends. The next cycle continues where the previous one left off.

You can control the starting cycle using the loop-start submission option (defaults to 1). To set the final cycle, use the loop-end option. The cycle specified as loop-end will be the last one executed.

Archive directory

Each loop job creates an archive directory inside the input directory. This directory is not copied to the job's working directory, so it can safely hold large amounts of data. In loop jobs, the archive serves two main purposes:

- to identify and initialize the current cycle of the loop job,

- to store data from previous cycles without copying them to the working directory.

You can control the archive directory's name using the archive submission option (default: storage).

Archived files should follow a specific filename format that includes the job cycle number they belong to. You can define this format using the archive-format submission option (default: job%04d). In this format, %04d is replaced by the cycle number — for example, job0001 for cycle 1, job0002 for cycle 2, job0143 for cycle 143 and so on.
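The %04d placeholder behaves like the corresponding printf conversion, so you can preview the names a given format produces directly in bash. The helper format_cycle below is hypothetical and only uses bash's own printf; it does not call qq.

```bash
#!/usr/bin/env bash
# Hypothetical helper: expands an archive format for a given cycle number
# using bash's printf (an analogy for how qq substitutes %04d).
format_cycle() {
    local archive_format="$1" cycle="$2"
    # the format string intentionally comes from a variable
    printf "${archive_format}\n" "$cycle"
}

format_cycle 'job%04d' 1     # prints job0001
format_cycle 'job%04d' 2     # prints job0002
format_cycle 'job%04d' 143   # prints job0143
```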

When a new cycle is submitted (either manually or automatically by the previous one), qq sets the current cycle number based on the highest cycle number found in the names of the archived files. In other words: in each cycle of a loop job, at least one file must be added to the archive whose name includes the number of the next cycle. Otherwise, the job submission will fail with an error.

(If no archive directory or archived files exist, the cycle number defaults to loop-start.)
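To make the cycle detection more concrete, here is a minimal bash sketch of the rule described above: scan the archive for names matching the format, take the highest cycle number, and fall back to loop-start if nothing is found. It is not qq's implementation. The function name current_cycle is hypothetical, and the format is simplified to a fixed prefix (job) followed by at least four digits.

```bash
#!/usr/bin/env bash
# Hypothetical sketch, not qq's implementation.
# Usage: current_cycle ARCHIVE_DIR PREFIX [LOOP_START]
current_cycle() {
    local archive_dir="$1" prefix="$2" loop_start="${3:-1}"
    local max=-1 path name num
    for path in "$archive_dir"/*; do
        # skip the unexpanded glob if the directory is empty or missing
        [[ -e "$path" ]] || continue
        name="${path##*/}"
        if [[ "$name" =~ ${prefix}([0-9]{4,}) ]]; then
            # force base 10 so that leading zeros are not read as octal
            num=$(( 10#${BASH_REMATCH[1]} ))
            if (( num > max )); then
                max=$num
            fi
        fi
    done
    if (( max < 0 )); then
        # no archived files: start from loop-start
        echo "$loop_start"
    else
        echo "$max"
    fi
}
```

With an archive containing job0008.txt and job0009.init, current_cycle storage job prints 9; with an empty or missing archive, it prints the loop-start value.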

Working with the archive

You typically should not transfer files from and to the archive directly inside your submitted script. If you follow the proper naming etiquette, the qq run environment will handle all archiving operations for you.

At the start of each cycle, after copying files from the input directory to the working directory, qq run checks the archive and automatically copies all files associated with the current cycle into the working directory. For example, if the current cycle number is 8 and archive-format is job%04d, any file in the archive containing job0008 in its name will be automatically copied to the working directory. These files can then be used to initialize the next cycle of the job.

After the submitted script finishes successfully, qq moves all files matching the archive-format (for any cycle) to the archive directory. For example, if the 8th cycle produces the files job0008.txt, job0008.dat, and job0009.init, and the archive format is job%04d, all three files will be moved to the archive. Only after these files are archived are the remaining files in the working directory moved to the input directory. This ensures that archived files don't clutter the input directory or get copied to the next cycle's working directory.
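The order of operations after a successful cycle can be imitated with the following bash sketch. It is a simplified illustration, not qq's code: the function archive_then_return is hypothetical, only regular files in the top level of the working directory are handled, and the archive format is reduced to a prefix followed by four digits.

```bash
#!/usr/bin/env bash
# Hypothetical sketch, not qq's implementation.
# Usage: archive_then_return WORK_DIR ARCHIVE_DIR INPUT_DIR PREFIX
archive_then_return() {
    local work_dir="$1" archive_dir="$2" input_dir="$3" prefix="$4"
    local path name
    mkdir -p "$archive_dir"

    # first pass: move files matching the archive format (any cycle)
    for path in "$work_dir"/*; do
        [[ -f "$path" ]] || continue
        name="${path##*/}"
        if [[ "$name" =~ ${prefix}[0-9]{4} ]]; then
            mv "$path" "$archive_dir"/
        fi
    done

    # second pass: everything that remains goes back to the input directory
    for path in "$work_dir"/*; do
        [[ -f "$path" ]] || continue
        mv "$path" "$input_dir"/
    done
}
```

Applied to a working directory containing job0008.txt, job0008.dat, job0009.init, and md.log with the prefix job, the first three files end up in the archive and only md.log is returned to the input directory.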

In summary, unlike with Infinity, you do not need to explicitly move files to and from the archive. You only need to name them accordingly, and qq will archive them automatically.

If the script fails or the job is killed, no archival is performed. As with standard jobs, all files remain in the working directory and only qq runtime files are copied to the input directory. Note that this behavior can be changed by providing a non-default archival mode.

Be aware that if your input directory contains a file whose name matches the archive format, it will end up in the archive directory, where it will either sit uselessly or potentially overwrite something important. Make sure that files you do not want placed into the archive are named differently from the files intended for archival.

Resubmitting

After the current cycle finishes the execution of the submitted script, archives the relevant files, and copies the other files to the input directory, qq resubmits the job. This means that the next cycle is submitted from the original input directory. By default, the resubmission occurs from either the original input machine or the current main execution node, depending on the batch system. You can customize this behavior using the --resubmit-from option of qq submit.

The new job (the next cycle) waits for the previous one to finish completely before starting. When it begins, even before creating its working directory, qq archives runtime files from the previous cycle, renaming them according to the specified archive-format.

If the current cycle of the loop job corresponds to loop-end, no resubmission is performed.

Extending a loop job

Sometimes, after a job completes N cycles, you may realize you need M more. To extend the job, simply submit it again from the same input directory with loop-end set to N + M, either on the command line or in the submission script.

Importantly: you do not need to delete any runtime files from the previous cycle — and you probably shouldn't. qq submit can detect that you are extending an existing loop job and will handle the continuation correctly. This has the added benefit that the runtime files from the Nth cycle will be properly archived.

Forcing qq not to resubmit

You can manually force qq not to submit the next cycle of a loop job, even if the current cycle number has not yet reached loop-end, by returning the value of the environment variable QQ_NO_RESUBMIT from within the script:

#!/usr/bin/env -S qq run

# qq job-type loop
# qq loop-end 100
# qq archive storage
# qq archive-format md%04d

...

# if a specific condition is met, do not resubmit but finish successfully
if [ -n "${SOME_CONDITION}" ]; then
    exit "${QQ_NO_RESUBMIT}"
fi

exit 0

If qq detects this exit code, it will not submit the next cycle of the loop job. The current cycle will still be marked as successfully finished (exit code 0).

Continuous jobs

Continuous jobs are jobs that automatically submit their continuation at the end of execution, but unlike loop jobs, do not track their current cycle and do not perform archiving or any other advanced operations.

They simply continue running and submitting their continuations until resubmission is explicitly stopped by returning the value of the environment variable QQ_NO_RESUBMIT from the executed script, or until the job is manually killed or fails.

To submit a continuous job, run:

qq submit (...) --job-type continuous

Submitting and running a continuous job

When submitting a continuous job, the sequence of operations is initially the same as for a standard job. The job gets queued by the batch system, then starts, creates a working directory, copies the data from the input directory there, and executes the submitted script.

Once the script successfully finishes, the data are transferred back from the working directory to the input directory. Then the job is automatically resubmitted using the same submission options. The new job has exactly the same name as the previous job, meaning that runtime files for the next job overwrite those created for the previous job. Once the next job finishes, it also automatically submits its continuation, and this continues indefinitely until the job fails or is killed. Failed and killed jobs are not resubmitted.

Continuous jobs do not perform any archiving operations and overwrite their runtime files. If you want to or need to keep runtime files for all your finished jobs, use a loop job instead.

Forcing a continuous job not to resubmit

A job that runs indefinitely may be useful in some cases, but it is typically more useful to be able to stop a job from submitting its own continuation once a specific condition is met.

To do this, you can use the same mechanism as for loop jobs—namely, by returning the value of the environment variable QQ_NO_RESUBMIT from within the executed script:

#!/usr/bin/env -S qq run

# qq job-type continuous

...

# if a specific condition is met, do not resubmit but finish successfully
if [ -n "${SOME_CONDITION}" ]; then
    exit "${QQ_NO_RESUBMIT}"
fi

exit 0

If qq detects this exit code (${QQ_NO_RESUBMIT}), it will not submit the continuation of the continuous job. The current run will still be marked as successfully finished (exit code 0).

Extending a continuous job

In some cases, you may want to prolong a continuous job that has successfully finished (and that has been forced not to submit its own continuation). As with loop jobs, you do not need to delete the runtime files. You can simply run qq submit again; qq will recognize that a continuous job has been running in the directory and will allow you to submit the job. The extended job will continue running and submitting its continuation until its script exits with the value of the environment variable QQ_NO_RESUBMIT or until the job fails or is killed.

Using continuous jobs is not recommended for long-running simulations that generate large amounts of data.

When using local scratch as your working directory (the default), qq copies all files from the job's input directory to the working directory. If you do not archive your generated data (such as MD trajectories), everything your simulation has generated will be copied to the working directory in each job cycle, which can consume significant time and disk space (you may easily exceed the default storage quota allocated for your working directory on scratch).

If possible, use loop jobs instead, as they support data archiving. For Gromacs simulations, qq provides run scripts for running long simulations.

Specifying resources

Each qq job requires some resources to run. These resources need to be requested at job submission time — the batch system uses this information to find suitable compute nodes and to ensure that jobs do not interfere with each other. Requesting too little may cause your job to fail or get killed; requesting too much may result in longer queue waiting times.

You can specify resources on the command line when running qq submit, or inside the submitted script itself using qq directives. If a resource is not specified, its value falls back to the queue default, then the server default, and finally the qq-level default for the given environment — in that order of priority.

In this section of the manual, whenever you see an option such as --nnodes, --ncpus, or --walltime, it is an option of the qq submit command.

Number of nodes

Use --nnodes to specify the number of compute nodes to allocate for the job.

Most jobs only need a single node, and this is typically the default. You only need to request multiple nodes if your job uses a parallelization framework that supports multi-node execution, such as MPI.

Number of CPU cores

Each compute node has a fixed number of CPU cores. You can typically request a subset of them for your job. You can specify the number of CPU cores either per node or in total:

- --ncpus-per-node: specifies the number of CPU cores per requested compute node.

- --ncpus: specifies the total number of CPU cores for the entire job. Overrides --ncpus-per-node.

For example, if you request 2 nodes and 16 CPU cores per node, your job will have 32 CPU cores in total. You can express this either as --nnodes 2 --ncpus-per-node 16 or --nnodes 2 --ncpus 32.
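The relationship between the two ways of requesting CPU cores is simple multiplication, as this small bash sketch shows. The helper total_ncpus is hypothetical and serves only to check your own numbers before submitting.

```bash
#!/usr/bin/env bash
# Hypothetical helper: total CPU cores implied by a per-node request.
total_ncpus() {
    local nnodes="$1" ncpus_per_node="$2"
    echo $(( nnodes * ncpus_per_node ))
}

total_ncpus 2 16   # prints 32
```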

Amount of memory (RAM)

Each compute node has a fixed amount of RAM. You can specify how much memory your job needs either per CPU core, per node, or in total:

- --mem-per-cpu: specifies the amount of memory per requested CPU core.

- --mem-per-node: specifies the amount of memory per requested compute node. Overrides --mem-per-cpu.

- --mem: specifies the total amount of memory for the entire job. Overrides both --mem-per-cpu and --mem-per-node.

Memory sizes are specified as N<unit> where unit is one of b, kb, mb, gb, tb, pb (e.g., 500mb, 32gb).
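If you generate submission commands from your own scripts, a quick format check can catch typos early. The bash sketch below is an assumption-laden illustration: the function is_valid_size is hypothetical, and it assumes that N is a whole number, that units are lowercase, and that there is no space between the number and the unit. qq itself may accept more (or fewer) variants.

```bash
#!/usr/bin/env bash
# Hypothetical check, not part of qq. Assumes an integer followed directly
# by one of the lowercase units listed in the manual.
is_valid_size() {
    [[ "$1" =~ ^[0-9]+(b|kb|mb|gb|tb|pb)$ ]]
}

for size in 500mb 32gb 32 10xb; do
    if is_valid_size "$size"; then
        echo "$size: ok"
    else
        echo "$size: not in N<unit> form"
    fi
done
```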

Number of GPUs

Some compute nodes are equipped with GPUs, which can dramatically speed up certain types of computations — particularly in machine learning, molecular dynamics, and other highly parallelizable workloads. You can specify the number of GPUs either per node or in total:

- --ngpus-per-node: specifies the number of GPUs per requested compute node.

- --ngpus: specifies the total number of GPUs for the entire job. Overrides --ngpus-per-node.

Walltime

Use --walltime to specify the maximum runtime allowed for the job.

Once this time limit is reached, the batch system kills the job regardless of whether it has finished. Examples of valid values: 1d, 12h, 10m, 24:00:00.
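To compare walltime values written in different forms, it can help to convert them to seconds. The following bash sketch handles only the forms shown in the examples above and assumes that d, h, and m stand for days, hours, and minutes and that the colon form is HH:MM:SS. The function walltime_to_seconds is hypothetical and is not how qq parses the option.

```bash
#!/usr/bin/env bash
# Hypothetical converter, not part of qq. Supports only the documented
# example forms: <N>d, <N>h, <N>m, and HH:MM:SS.
walltime_to_seconds() {
    local value="$1" n
    if [[ "$value" =~ ^([0-9]+):([0-9]{2}):([0-9]{2})$ ]]; then
        # 10# forces base 10 so that values like 08 are not read as octal
        echo $(( 10#${BASH_REMATCH[1]} * 3600 + 10#${BASH_REMATCH[2]} * 60 + 10#${BASH_REMATCH[3]} ))
    elif [[ "$value" =~ ^([0-9]+)([dhm])$ ]]; then
        n=$(( 10#${BASH_REMATCH[1]} ))
        case "${BASH_REMATCH[2]}" in
            d) echo $(( n * 86400 )) ;;
            h) echo $(( n * 3600 )) ;;
            m) echo $(( n * 60 )) ;;
        esac
    else
        echo "unrecognized walltime: $value" >&2
        return 1
    fi
}

walltime_to_seconds 1d         # prints 86400
walltime_to_seconds 24:00:00   # prints 86400
```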

Working directory

A working directory is the directory where a qq job is actually executed. qq copies the data from the input directory to the working directory, executes the submitted script there, and then copies the data back.

Typically, the working directory resides on a compute node’s local storage, but it can also be on a shared filesystem — or even be the same as the input directory.

How the working directory is created depends on the batch system and the specific environment.

Robox, Sokar, and Metacentrum clusters

On Robox, Sokar, and all Metacentrum clusters (collectively known as "clusters of the Metacentrum family"), the working directory is, by default, created on the local scratch storage of the main compute node assigned to the job. You can, however, explicitly choose to use SSD scratch, shared scratch, in-memory scratch (if available), or even use the input directory itself as the working directory.

To control where the working directory is created, use the work-dir option (or the equivalent spelling workdir) of the qq submit command:

- --work-dir scratch_local: Default option on Metacentrum-family clusters. Creates the working directory on an appropriate local scratch storage. Depending on the setup, it may also be created on SSD scratch.

- --work-dir scratch_ssd: Creates the working directory on SSD-based scratch storage.

- --work-dir scratch_shared: Creates the working directory on shared scratch storage accessible by multiple nodes.

- --work-dir scratch_shm: Creates the working directory in RAM (in-memory scratch). Useful for jobs requiring extremely fast I/O. Note that if your job fails, your data are immediately lost.

- --work-dir input_dir: Uses the input directory itself as the working directory. Files are not copied anywhere. Can be slower for I/O-heavy jobs.

- --work-dir job_dir: Same as input_dir.

Not all scratch types are available on every compute node. Use qq nodes to see which storage options are supported by each node.

For more details on scratch storage types available on Metacentrum-family clusters, visit the official documentation.

Specifying the working directory size

Local, SSD, and shared scratch

By default, qq allocates 1 GB of storage per CPU core when using a scratch directory. If you need a different amount of storage, you can adjust it using the following qq submit options:

--work-size-per-cpu (or --worksize-per-cpu) – specifies the amount of storage per requested CPU core.

--work-size-per-node (or --worksize-per-node) – specifies the amount of storage per requested compute node.

--work-size (or --worksize) – specifies the total amount of storage for the entire job.

--work-size-per-node overrides --work-size-per-cpu. --work-size overrides both --work-size-per-cpu and --work-size-per-node.

Example:

qq submit --work-size 16gb (...)

# or

qq submit --work-size-per-cpu 2gb (...)

Storage sizes are specified as N<unit> where unit is one of b, kb, mb, gb, tb, pb (e.g., 500mb, 32gb).
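The override rules for the three work-size options can be restated as a small sketch. The function effective_work_size_gb is hypothetical and only mirrors the precedence described above; it assumes all sizes are given in whole gigabytes and that the CPU count is the total number of cores requested for the job.

```shell
# Illustrative helper (not part of qq): resolve the total scratch size in GB.
# Arguments: ncpus nnodes per_cpu_gb per_node_gb total_gb
# Pass an empty string "" for any size option that was not given.
# Assumption: ncpus is the total number of CPU cores requested for the job.
effective_work_size_gb() {
    ncpus=$1
    nnodes=$2
    per_cpu=$3
    per_node=$4
    total=$5
    if [ -n "$total" ]; then
        echo "$total"                        # --work-size overrides both others
    elif [ -n "$per_node" ]; then
        echo $(( per_node * nnodes ))        # --work-size-per-node beats per-CPU
    else
        echo $(( ${per_cpu:-1} * ncpus ))    # default: 1 GB per CPU core
    fi
}

effective_work_size_gb 8 1 "" "" ""    # prints 8  (default)
effective_work_size_gb 8 1 2 "" ""     # prints 16 (2 GB per core)
effective_work_size_gb 8 1 2 "" 16     # prints 16 (total wins)
```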

In-memory scratch

If you use --work-dir scratch_shm, you should allocate memory instead of work-size, using the mem, mem-per-node, or mem-per-cpu options. Make sure the total allocated memory covers both your program’s memory usage and your in-memory storage needs. By default, qq allocates 1 GB of RAM per CPU core for all jobs.

--mem-per-node overrides --mem-per-cpu. --mem overrides both --mem-per-cpu and --mem-per-node.

Example:

qq submit --mem 32gb (...)

# or

qq submit --mem-per-cpu 4gb (...)
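As a rough sketch of the sizing rule for in-memory scratch, the following helper adds the memory needed by the program to the space needed for the in-memory files and prints the matching submit options. The helper name shm_submit_options and the example numbers are made up for illustration; only the --work-dir and --mem options come from this manual.

```shell
# Illustrative helper (not part of qq): print submit options for in-memory scratch.
# Arguments: memory needed by the program (GB), size of the in-memory data (GB)
shm_submit_options() {
    program_gb=$1
    scratch_gb=$2
    # the in-memory working directory consumes RAM, so both needs are summed
    echo "--work-dir scratch_shm --mem $(( program_gb + scratch_gb ))gb"
}

shm_submit_options 8 24   # prints: --work-dir scratch_shm --mem 32gb
```

The printed options can then be added to a qq submit command together with the remaining options of the job.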

Not requesting scratch

If you use --work-dir input_dir (or --work-dir job_dir), the available storage is limited by your shared filesystem quota.

Karolina supercomputer

On the Karolina supercomputer, the working directory is, by default, created inside your project directory on the shared scratch storage. You can, however, also choose to use the input directory itself as the working directory.

To control where the working directory is created, use the work-dir option (or the equivalent spelling workdir) of the qq submit command:

--work-dir scratch – Default option on Karolina. Creates the working directory on the shared scratch storage.

--work-dir input_dir – Uses the input directory itself as the working directory. Files are not copied anywhere. If you use this option, it is strongly recommended to submit from the scratch storage.

--work-dir job_dir – Same as input_dir.

Recommendations:

- Submit jobs from your Project storage (/mnt/...). With the default --work-dir option, qq automatically copies your data to scratch, executes the job there, and then copies the results back to your input directory.

- The size of the working directory on Karolina is limited by your filesystem quota, so you do not need to specify the work-size option.

LUMI supercomputer

On the LUMI supercomputer, the working directory is, by default, created inside your project directory on the shared scratch storage. You can, however, also choose to create the working directory on the flash storage or use the input directory itself as the working directory.

To control where the working directory is created, use the work-dir option (or the equivalent spelling workdir) of the qq submit command:

--work-dir scratch – Default option on LUMI (purely for consistency with the behavior of qq in other environments). Creates the working directory on the shared scratch storage.

--work-dir flash – Creates the working directory on the shared flash storage. This storage can be faster for I/O-heavy jobs. Note that on LUMI, you are billed for the amount of storage you use and flash storage is much more expensive than scratch storage!

--work-dir input_dir – Recommended option. Uses the input directory itself as the working directory. Files are not copied anywhere. If you use this option, you should submit the job from the scratch or flash storage.

--work-dir job_dir – Same as input_dir.

Recommendations:

- Submit jobs from your project's scratch (/scratch/<project_id>) and use the option --workdir input_dir!

- On LUMI, you are billed for the amount of storage you use! Try to avoid storing large amounts of data in the project's storages.

For more details on storage types available on LUMI, visit the official documentation.

Node properties

Some clusters have compute nodes with special hardware or software configurations, identified by node properties. Use --props to specify which properties are required or prohibited for your job. The value is a colon-, comma-, or space-separated list of property expressions.

A property can be a simple boolean flag (a node either has it or it doesn't), or it can carry a specific value, in which case it is expressed as property=value. To prohibit a property or a property value, prefix it with ^. For example:

--props cl_two – the job will only run on nodes that have the cl_two property.

--props ^cl_two – the job will only run on nodes that do not have the cl_two property.

--props cl_two,singularity – the job will only run on nodes that have both the cl_two and singularity properties.

--props gpu_cap=sm_120 – the job will only run on nodes equipped with GPUs with compute capability 12.0 (Blackwell).

--props gpu_cap=^sm_120 – the job will not run on nodes equipped with GPUs with compute capability 12.0 (Blackwell).

You can use qq nodes to browse the available nodes and their properties.

Note that prohibiting property values is only supported for the PBS batch system (on Robox, Sokar, Metacentrum).
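The property syntax described above can be illustrated with a short sketch that splits a --props value into its expressions and labels each one as required or prohibited. The function describe_props is hypothetical and is not how qq itself parses the option; it only follows the separators and the ^ prefix described in this section.

```shell
# Illustrative helper (not part of qq): split a --props value into expressions
# and report whether each one is required or prohibited.
describe_props() {
    # colons and commas are turned into spaces, then the list is split on whitespace
    for expr in $(printf '%s' "$1" | tr ':,' '  '); do
        case "$expr" in
            ^*)   echo "prohibit ${expr#^}" ;;                      # ^property
            *=^*) echo "prohibit ${expr%%=*}=${expr#*=^}" ;;        # property=^value
            *)    echo "require $expr" ;;                           # property or property=value
        esac
    done
}

describe_props 'cl_two,singularity gpu_cap=^sm_120'
```

For the value above, the sketch reports cl_two and singularity as required and gpu_cap=sm_120 as prohibited.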

vnode property

On Robox, Sokar, and Metacentrum, each compute node has a special vnode property identifying that specific node. The value of the vnode attribute corresponds to the name of the node. You can use this attribute to force your job to run on a particular node (--props vnode=zeroc1) or to prevent it from running there (--props vnode=^zeroc1).

Cluster specifics and recommendations

In this section, we describe behavior that is specific to the individual qq-supported clusters and provide recommendations on how to submit and manage jobs on these clusters.

Robox, Sokar, and Metacentrum clusters

- Use shared storages (e.g., brno14-ceitec, brno12-cerit) for storing your simulation data and submitting jobs.

- With default options, qq automatically allocates storage on the compute node(s), executes your job there, and transfers the data back. qq takes care of these copying operations.

- If you want to know more about configuring the storage qq uses for your job, read this section of the manual.

- When writing scripts to be executed, you can assume that all files in the script's parent directory will be accessible using relative paths. You can also use absolute paths to access files on shared storages. However, you cannot easily access the local storage on your desktop.

- From your Robox desktop, you can submit jobs to all Metacentrum-family clusters (see Inter-cluster job management).

- Metacentrum-family clusters are very heterogeneous: you can use pretty much any number of CPUs and GPUs you need, as long as they fit on a single node. Running multi-node jobs can be complicated, as most clusters do not have fast interconnections between individual compute nodes.

- When submitting jobs to Metacentrum, submit with --props ^cl_samson to avoid using the samson node, which does not support qq.

- On Metacentrum, some nodes or node groups may be slow or unstable. You can filter them out by submitting with --props ^cl_<cluster_name> to exclude a cluster, or with --props vnode=^<node_name> to exclude a specific node.

- On Robox, you should generally not submit to the default queue as it only contains desktops. By default, qq is not installed on other people's desktops, so your jobs will most likely crash. Instead, use the cpu or gpu queues. If you want to submit to your own desktop, you can use the default queue but must explicitly select your desktop (using --props vnode=YOUR_DESKTOP_NAME).

Click here for detailed external documentation of the Metacentrum family clusters.

Karolina supercomputer

- Karolina has three different storages:

  - Home storage: small capacity

  - Project storage: large capacity, slow, persistent

  - Scratch storage: large capacity, fast, regularly cleared

- All storages are shared across all nodes of the supercomputer.

- It is recommended to store simulation data in the Project storage and submit jobs from there. Data on the Project storage are not deleted until the project finishes, and this storage has a large capacity (unlike Home storage).

- Prefer not to submit jobs from the Scratch storage, as its content is regularly deleted and you can lose your data.

- With default options, when submitting from the Project storage, qq automatically creates a directory on Scratch storage, executes your job there, and transfers the data back. qq takes care of these copying operations.

- If you want to know more about configuring the storage qq uses for your job, read this section of the manual.

- Submit jobs with the --account option providing your project ID. You can find your project ID by running it4ifree (left-most column, in the format OPEN-12-34).

- When submitting CPU-only jobs (queues starting with qcpu), you always need to allocate a full compute node. Each CPU node has 128 CPU cores. If you do not specify the number of CPUs, qq will use the correct value automatically.

- For most Gromacs simulations, it is recommended to simulate multiple systems as part of a single node-wide job. You can use the qq_loop_re or the qq_flex_re run scripts for that.

- When submitting CPU+GPU jobs (queues starting with qgpu), you can allocate as little as 1/8 of a compute node, which corresponds to 1 GPU and 16 CPU cores.

- Karolina's compute nodes have fast interconnections, making it easy to efficiently run jobs across multiple nodes.
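The GPU-queue arithmetic above (1/8 of a node is 1 GPU and 16 CPU cores) can be sketched as a small helper. The function karolina_gpu_job_cores is hypothetical; it simply scales the quoted share and assumes that a full GPU node therefore offers 8 GPUs.

```shell
# Illustrative helper (not part of qq): CPU cores matching a number of GPUs
# on Karolina's GPU queues, using the 1 GPU : 16 cores share quoted above.
karolina_gpu_job_cores() {
    ngpus=$1
    # assumption: 1/8 of a node per GPU implies 8 GPUs per node
    if [ "$ngpus" -lt 1 ] || [ "$ngpus" -gt 8 ]; then
        echo "expected 1 to 8 GPUs per node, got $ngpus" >&2
        return 1
    fi
    echo $(( ngpus * 16 ))
}

karolina_gpu_job_cores 2   # prints 32
```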

Click here for detailed external documentation of the Karolina supercomputer.

LUMI supercomputer

- LUMI has four different storages:

  - User home (/users/<username>): small capacity

  - Project space (/project/<project_id>): small capacity, slow

  - Project scratch (/scratch/<project_id>): large capacity, fast

  - Project flash (/flash/<project_id>): medium capacity, super fast

- All storages are shared across all nodes of the supercomputer.

- All storages are persistent for the duration of the project, i.e., data are not deleted from any of them until the project completes. However, you are billed for using the storages, so try to keep the amount of stored data low.

- It is recommended to store simulation data on the scratch space and submit jobs from there. When doing so, submit with the --workdir input_dir option so that your data are not needlessly copied around.

- If you want to know more about configuring the storage qq uses for your job, read this section of the manual.

- Submit jobs with the --account option providing your project ID. You can find your project ID by running lumi-allocations (project ID should look like this: project_123456).

- When submitting to the standard-g (CPU+GPU) or standard (CPU-only) queues, you need to allocate full nodes. On each node, you can request 56 (standard-g) or 128 (standard) CPU cores.

- For most Gromacs simulations, it is recommended to simulate multiple systems as part of a single node-wide job. You can use the qq_loop_re or the qq_flex_re run scripts for that.

- When submitting to the small-g (CPU+GPU) or small (CPU-only) queues, you can allocate a smaller amount of resources.

- The strongly recommended ratio of GPUs to CPU cores on all GPU queues is 1:7 (see here for more details).

- Each LUMI CPU core can run two threads. This means that when you request N CPU cores (e.g., 7), qq jobs will report your job as using 2N cores (e.g., 14) once it starts running. This is expected behavior.

- When running Gromacs, you can choose between using N or 2N OpenMP threads per node. Depending on your setup, one may perform better than the other. By default, qq run scripts for Gromacs use N OpenMP threads. To use 2N threads instead, replace the following lines in the scripts

  export OMP_NUM_THREADS="${NTOMP}"
  (...) ${PLUMED} -ntomp ${NTOMP} ${APPEND} -nb ${NB} -pin on -maxh ${MAX_TIME}

  with

  export OMP_NUM_THREADS="$((NTOMP * 2))"
  (...) ${PLUMED} -ntomp $((NTOMP * 2)) ${APPEND} -nb ${NB} -pin on -maxh ${MAX_TIME}

- LUMI's compute nodes have fast interconnections, making it easy to efficiently run jobs across multiple nodes.
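The 1:7 GPU-to-CPU ratio and the doubled core count reported by qq jobs can be worked through with a small sketch. The helper lumi_gpu_job_resources is hypothetical and only restates the numbers given in the list above.

```shell
# Illustrative helper (not part of qq): resources for a LUMI GPU job.
# Uses the recommended 1:7 GPU-to-CPU ratio and two threads per CPU core.
lumi_gpu_job_resources() {
    ngpus=$1
    ncpus=$(( ngpus * 7 ))              # recommended: 7 CPU cores per GPU
    reported=$(( ncpus * 2 ))           # qq jobs reports 2N cores for N requested
    echo "ngpus=$ngpus ncpus=$ncpus reported_cores=$reported"
}

lumi_gpu_job_resources 1   # prints: ngpus=1 ncpus=7 reported_cores=14
lumi_gpu_job_resources 8   # prints: ngpus=8 ncpus=56 reported_cores=112
```

Eight GPUs give 56 CPU cores, which matches the full-node request on the standard-g queue.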

Click here for detailed external documentation of the LUMI supercomputer.

Commands

qq provides a range of commands for submitting, executing, monitoring, and managing your jobs, as well as for displaying information about available compute nodes and submission queues. This section describes how to use each of them.

Each command is run in the terminal using the following syntax:

qq [COMMAND] [ARGS] [OPTIONS]

For example:

qq info 123456 -s

prints a short summary of the job with ID 123456.

To see a list of all available qq commands, simply type:

qq

For detailed information about a specific command, use:

qq [COMMAND] --help

qq cd

The qq cd command is used to navigate to the input directory of a job. It is qq's equivalent of Infinity's pgo when used with a job ID.

Quick comparison with pgo

- Unlike pgo, qq cd does not have a dual function. pgo can either open a new shell on the job's main node or navigate to the job's input directory depending on the arguments provided. qq cd, on the other hand, always navigates to the input directory of the specified job in the current shell. It never opens a new shell.

- If you want to open a shell in the job's working directory instead, use qq go.

Description

Changes the current working directory to the input directory of the specified job.

qq cd [OPTIONS] JOB_ID

JOB_ID — Identifier of the job whose input directory should be entered.

Examples

qq cd 123456

Changes the current shell's working directory to the input directory of the job with ID 123456 located on the default batch server. If the job is located on a different batch server, you need to use the full ID including the server address.

Notes

- Works with any job type, including those not submitted using qq submit.

qq clear

The qq clear command is used to remove qq runtime files from the current or specified directory. It is qq's equivalent of Infinity's premovertf.

Quick comparison with premovertf

- qq clear checks whether the qq runtime files belong to an active or successfully completed qq job.

  - If they do, the files are not deleted (if you really want to delete them, you have to use the --force flag).

  - If they do not, the files are deleted without asking for confirmation.

- In contrast, premovertf simply lists the files and always asks for confirmation before deleting them (unless run as premovertf -f).

- qq clear can operate on a specific directory using the -d / --dir option.

Description

Deletes qq runtime files from the current or specified directory.

qq clear [OPTIONS]

Options

-d, --dir – Specify the directory to clear qq runtime files from.

--force – Force deletion of all qq runtime files, even if they belong to active or successfully completed jobs.

Examples

qq clear

Deletes all qq runtime files (files with extensions .out, .err, .qqinfo, .qqout) from the current directory, provided these files are not associated with any job or belong to a job that has been killed or has failed. If multiple jobs are represented in the directory, only files related to killed or failed jobs are deleted. This helps prevent accidental removal of files from running or successfully finished jobs.

qq clear -d gromacs/popc/job1

Deletes all suitable qq runtime files from the directory at the relative path gromacs/popc/job1.

qq clear --force

Deletes all qq runtime files from the current directory, regardless of their job state. In other words, all files with extensions .out, .err, .qqinfo, and .qqout will be removed. This is dangerous — only use the --force flag if you are absolutely sure you know what you are doing!

Notes

- You should not delete the .qqinfo file of a running job, as this will cause the job to fail!
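Before using --force, it can help to look at which files in a directory carry the qq runtime extensions. The following sketch lists them without deleting anything. The function list_qq_runtime_files is hypothetical and matches by extension only, so it may also list .out or .err files written by other programs; it does not reproduce the job-state checks that qq clear performs.

```shell
# Illustrative helper (not part of qq): list files in a directory that carry
# the qq runtime extensions, without deleting anything.
list_qq_runtime_files() {
    dir=${1:-.}    # default to the current directory
    find "$dir" -maxdepth 1 -type f \
        \( -name '*.out' -o -name '*.err' -o -name '*.qqinfo' -o -name '*.qqout' \) |
        sort
}
```

Calling list_qq_runtime_files gromacs/popc/job1 prints one matching path per line, or nothing if the directory holds no such files.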

qq go

The qq go command is used to navigate to the working directory of a job. It is qq's equivalent of Infinity's pgo when used in an input directory.

Quick comparison with pgo

- Unlike pgo, qq go does not have a dual function. pgo can either open a new shell on the job's main node or navigate to the job's input directory depending on the arguments provided. qq go, on the other hand, always opens a new shell in the job's working directory (on the job's main node, if available).

- If you want to navigate to the input directory instead, use qq cd.

- If you use qq go with a job ID, a new shell in the job's working directory will be opened.

- qq go always attempts to access the job's working directory if it exists, even if the job has failed or been killed; no --force option is required.

Description

Opens a new shell in the working directory of the specified qq job, or in the working directory of the job submitted from the current directory.

qq go [OPTIONS] JOB_ID

JOB_ID — One or more IDs of jobs whose working directories should be entered. Optional.

If no JOB_ID is specified, qq go searches for qq jobs in the current directory. If multiple suitable jobs are provided or found, qq go opens a shell for each job in turn.

Examples

qq go 123456

Opens a new shell in the working directory of the job with ID 123456 on its main working node. If you use just the numerical portion of the job ID, the job is assumed to be located on the default batch server. If the job is located on a different batch server, you need to use the full ID including the server address.

If the job does not exist, is not a qq job, its info file is missing, or the working directory no longer exists, the command exits with an error. If the job is not yet running, the command waits until the working directory is ready.

qq go 123456 144844 156432

For each of the specified jobs (123456, 144844, 156432), qq go opens a new shell in its working directory.

qq go

Opens a new shell in the working directory of the job whose info file is present in the current directory. If multiple suitable jobs are found, qq go opens a shell for each job in turn.

Notes

- Uses cd for local directories or ssh for remote hosts.

- Does not change the working directory of the current shell; it always opens a new shell at the destination.

qq info

The qq info command is used to monitor a qq job's state and display information about it. It is qq's equivalent of Infinity's pinfo.

Quick comparison with pinfo

- You can use qq info with a job ID to obtain information about a qq job without having to navigate to its input directory.

- Unlike pinfo, qq info focuses only on the most important details about a job. The output is intentionally compact and easier to read.

Description

Displays information about the state and properties of the specified qq job(s), or of qq jobs found in the current directory.

qq info [OPTIONS] JOB_ID

JOB_ID — One or more IDs of jobs to display information for. Optional.

If no JOB_ID is provided, qq info searches for qq jobs in the current directory. If multiple jobs are provided or found, qq info prints information for each job in turn.

Options

-s, --short — Display only the job ID and the current state of the job.

Examples

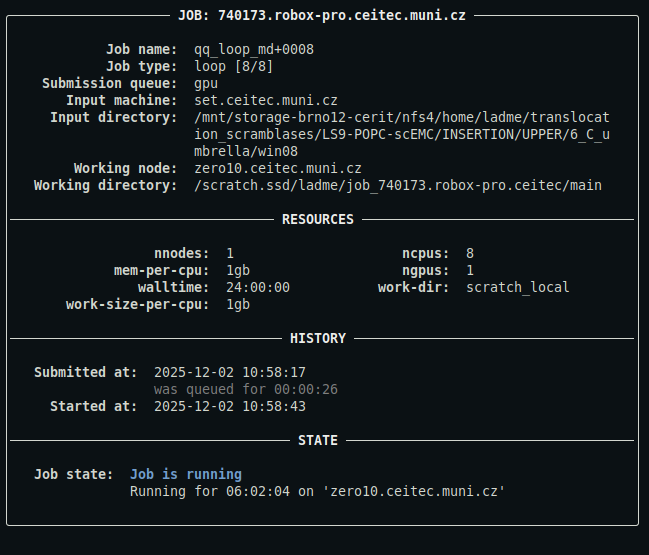

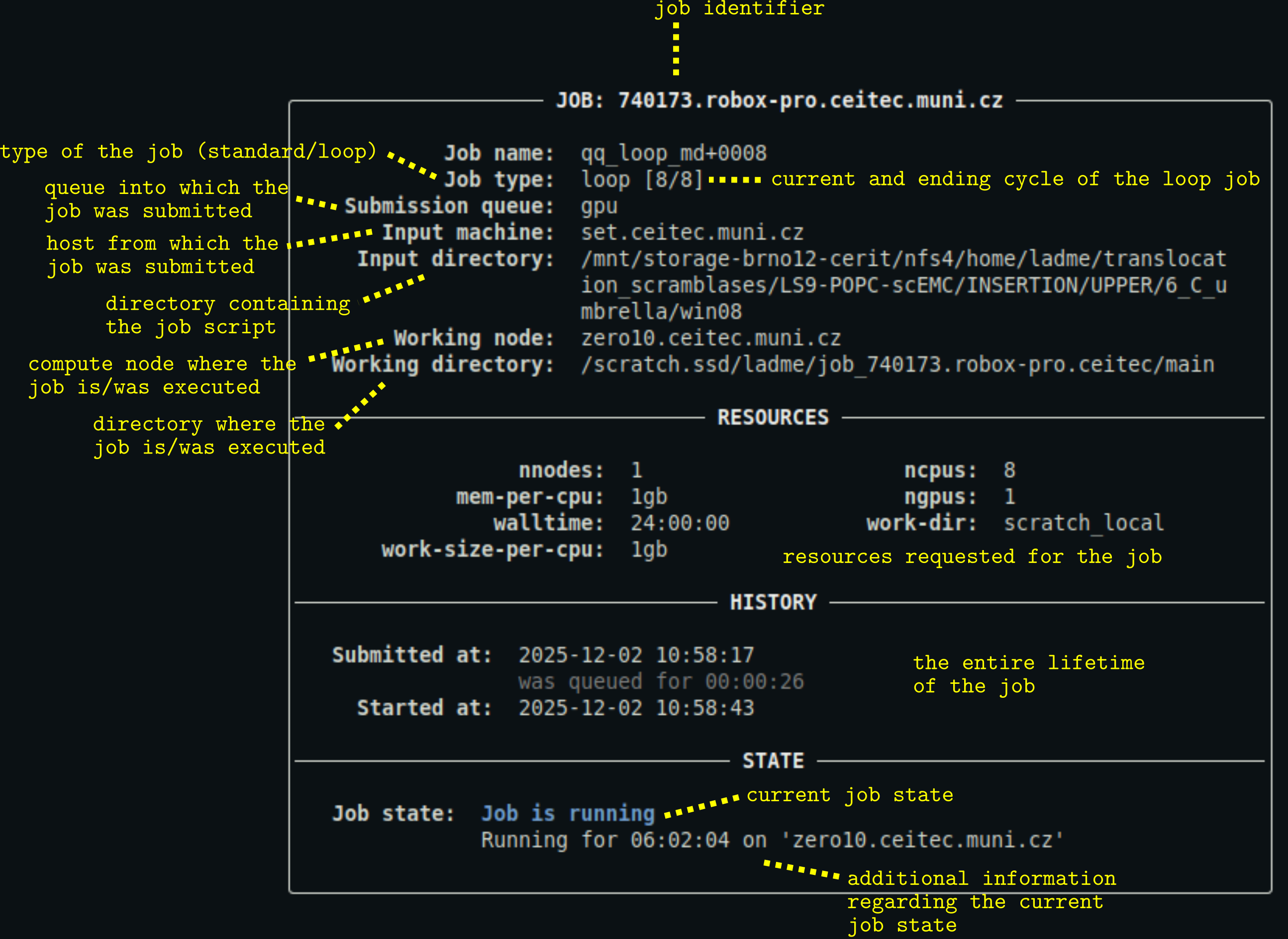

qq info 740173

Displays the full information panel for the job with ID 740173 located on the default batch server. If the job is located on a different batch server, you need to use the full ID including the server address.

This command only works if the job is a qq job with a valid and accessible info file, and the target batch server is reachable from the current machine.

This is what the output might look like:

For a detailed description of the output, see below.

qq info 740173 741234 741236

Displays full information panels for jobs 740173, 741234, and 741236.

qq info

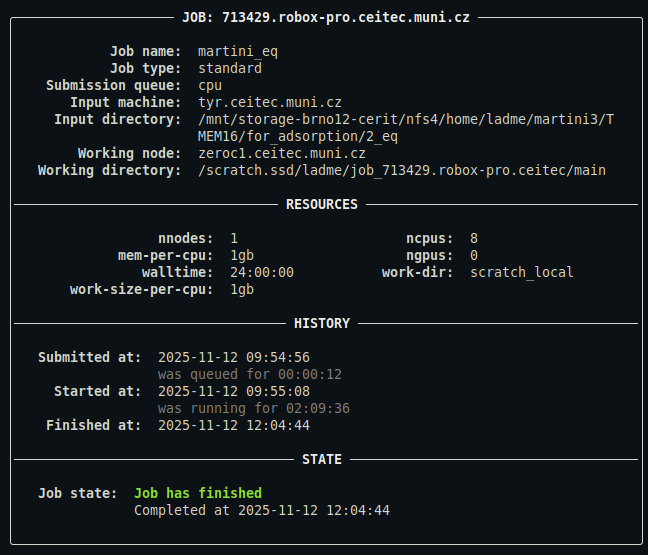

Displays the full information panel for all jobs whose info files are present in the current directory.

This is what the output might look like:

For a detailed description of the output, see below.

qq info -s

Displays short information for all jobs whose info files are present in the current directory. Only the jobs' full IDs and their current states are shown.

Description of the output

- You can customize the appearance of the output using a configuration file.

qq jobs

The qq jobs command is used to display information about a user's jobs. It is qq's equivalent of Infinity's pjobs.

Quick comparison with pjobs

- Unlike pjobs, qq jobs always shows the nodes that the job is running on, if any are assigned.

- Unlike pjobs, qq jobs distinguishes between failed/killed and successfully finished jobs in its output.

Description

Displays a summary of your jobs or the jobs of a specified user. By default, only unfinished jobs are shown.

qq jobs [OPTIONS]

Options

-u, --user TEXT — Username whose jobs should be displayed. Defaults to your own username.

-e, --extra — Include extra information about the jobs.

-a, --all — Include both uncompleted and completed jobs in the summary.

-s TEXT, --server TEXT — Show jobs for a specific batch server. If not specified, jobs on the default batch server are shown.

--yaml — Output job metadata in YAML format.

Examples

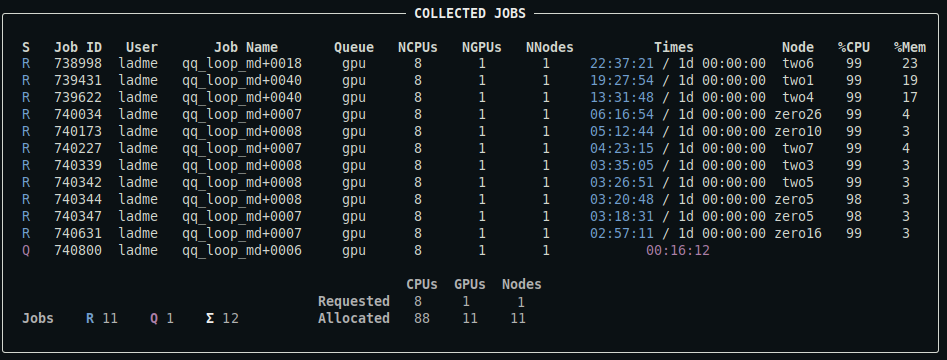

qq jobs

Displays a summary of your uncompleted jobs (queued, running, or exiting). This includes both qq jobs and any other jobs associated with the default batch server.

This is what the output might look like:

For a detailed description of the output, see below.

qq jobs -u user2

Displays a summary of user2's uncompleted jobs.

qq jobs -e

Includes extra information about your jobs in the output: the input machine (if available), the input directory, and the job comment (if available).

qq jobs --all

Displays a summary of all your jobs associated with the default batch server, both uncompleted and completed. Note that the batch system eventually removes records of completed jobs, so they may disappear from the output over time. This is what the output might look like:

For a detailed description of the output, see below.

qq jobs --server sokar

Displays a summary of all your uncompleted jobs associated with the sokar batch server that are available to you. sokar is a known shortcut for the full batch server name sokar-pbs.ncbr.muni.cz. You can use either of them. For more information about accessing information from other clusters, read this section of the manual.

qq jobs --yaml

Prints a summary of your uncompleted jobs in YAML format. This output contains all available metadata as provided by the batch system.

Notes

- This command lists all types of jobs, including those submitted using qq submit and jobs created through other tools.

- The run times and job states may not exactly match the output of qq info, since qq jobs relies solely on batch system data and does not use qq info files.

Description of the output

- The output of qq stat is the same, except that it displays the jobs of all users.

- You can control which columns are displayed and customize the appearance of the output using a configuration file.

- Note that the %CPU and %Mem columns are not available on systems using Slurm (Karolina, LUMI).

qq kill

The qq kill command is used to terminate qq jobs. It is qq's equivalent of Infinity's pkill.

Quick comparison with pkill

- You can use qq kill with a job ID to terminate a job without having to navigate to its input directory.

- When prompted to confirm that you want to terminate a job, qq kill only requires pressing a single key (y to confirm or any other key to cancel), instead of typing 'yes' and pressing Enter.

- qq kill --force will attempt to terminate jobs even if qq considers them finished, failed, or already killed. This is useful for removing stuck or lingering jobs from the batch system.

Description

Terminates the specified qq job(s), or all qq jobs submitted from the current directory.

qq kill [OPTIONS] JOB_ID

JOB_ID — One or more IDs of jobs to terminate. Optional.

If no JOB_ID is provided, qq kill searches for qq jobs in the current directory. If multiple suitable jobs are provided or found, qq kill terminates each one in turn.

By default, qq kill prompts for confirmation before terminating each job.

Without the --force flag, it will only attempt to terminate jobs that are queued, held, booting, or running — not jobs that are already finished or killed. When the --force flag is used, qq kill attempts to terminate any job regardless of its state, including jobs that qq believes are already finished or killed. This can be used to remove lingering or stuck jobs.
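The rule above can be restated as a small decision sketch. The function kill_decision is hypothetical and is not qq's actual code; the state names are the ones listed in the paragraph above, and any other state is treated as already finished.

```shell
# Illustrative restatement of the rule above (not qq's actual code).
# Arguments: job state, then "force" or "" (empty for a normal kill)
kill_decision() {
    state=$1
    force=$2
    if [ "$force" = "force" ]; then
        echo "attempt"          # --force ignores the recorded job state
        return 0
    fi
    case "$state" in
        queued|held|booting|running) echo "attempt" ;;
        *) echo "skip" ;;       # e.g. finished, failed, or killed
    esac
}

kill_decision running ""       # prints attempt
kill_decision finished ""      # prints skip
kill_decision finished force   # prints attempt
```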

Options

-y, --yes — Terminate the job without asking for confirmation.

--force — Forcefully terminate the job, ignoring its current state and skipping confirmation.

Examples

qq kill 123456

Terminates the job with ID 123456 located on the default batch server. If the job is located on a different batch server, you need to use the full ID including the server address.

Upon running this command, you will be prompted to confirm the termination by pressing y. This command only works if the specified job is a qq job with a valid and accessible info file, and the target batch server is reachable from the current machine.

qq kill 123456 144844 156432

Terminates jobs 123456, 144844, and 156432. You will be asked to confirm each termination individually.

qq kill

Terminates all suitable qq jobs whose info files are present in the current directory. You will be asked to confirm each termination individually.

qq kill 123456 -y

Terminates the job with ID 123456 without asking for confirmation (assumes 'yes').

qq kill 123456 --force

Forcefully terminates the job with ID 123456. This kills the job immediately and without confirmation, regardless of qq's recorded job state.

qq killall

The qq killall command is used to terminate all of your qq jobs. It is qq's equivalent of Infinity's pkillall.

Quick comparison with pkillall

- qq killall can only terminate jobs submitted using qq submit; other jobs are not affected.

Description

Terminates all qq jobs submitted by the current user.

qq killall [OPTIONS]

This command only terminates qq jobs — other jobs in the batch system are not affected.

By default, qq killall prompts for confirmation before terminating the jobs.

Options

-y, --yes — Terminate all jobs without confirmation.

--force — Forcefully terminate all jobs, ignoring their current states and skipping confirmation.

-s TEXT, --server TEXT – Terminate all your jobs on the specified batch server. If not specified, the current server is used.

Examples

qq killall

Terminates all your qq jobs with valid and accessible info files. You will be prompted to confirm termination by pressing y.

qq killall -y

Terminates all your qq jobs with valid and accessible info files without asking for confirmation (assumes "yes").

qq killall --force

Forcefully terminates all your qq jobs with valid and accessible info files. No confirmation is requested, and the jobs will be terminated even if qq believes they are already finished, failed, or killed.

qq killall --server sokar

Terminates all your qq jobs with valid and accessible info files associated with the sokar batch server. You will be prompted to confirm termination by pressing y.

qq nodes

The qq nodes command displays the compute nodes available on the current batch server. It is qq's equivalent of Infinity's pnodes.

Quick comparison with pnodes

- The output of qq nodes is more dynamically formatted than that of pnodes. If an entire group of nodes lacks a specific attribute (e.g., no GPUs, no shared scratch storage), the corresponding column is hidden.

- Node group assignments are always determined heuristically based on node names. A full match of the alphabetic part of the name is required for nodes to belong to the same group (unlike pnodes, which uses partial matches).

Description

Displays information about the nodes managed by the batch system. By default, only nodes that are available to you are shown.

qq nodes [OPTIONS]

Nodes are grouped heuristically into node groups based on their names.
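One way to picture name-based grouping is the sketch below, which derives a group key from a node name by keeping only its letters. This is an assumption made for illustration: the function node_group is hypothetical, and the actual heuristic used by qq nodes may treat names differently.

```shell
# Illustrative sketch (not qq's actual heuristic): derive a group key from a
# node name by keeping only its alphabetic characters.
node_group() {
    # delete everything except letters and the trailing newline
    printf '%s\n' "$1" | tr -cd '[:alpha:]\n'
}

# Nodes with the same key would land in the same group under this assumption.
for node in zeroc1 zeroc2 zero1; do
    echo "$(node_group "$node") $node"
done
```

Under this assumption, zeroc1 and zeroc2 share the key zeroc, while zero1 gets the separate key zero because only a full match of the alphabetic part counts.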

Options

-a, --all — Display all nodes, including those that are down, inaccessible, or reserved.

-s TEXT, --server TEXT — Show nodes for a specific batch server. If not specified, nodes for the default batch server are shown.

--yaml — Output node metadata in YAML format.

Examples

qq nodes

Displays a summary of all nodes associated with the default batch server that are available to you.

This is what the output might look like (truncated):

Output truncated. For a detailed description of the output, see below.

qq nodes --all

Displays a summary of all nodes associated with the default batch server, including those that are down, inaccessible, or reserved.

qq nodes --server sokar

Displays a summary of all nodes associated with the sokar batch server that are available to you. sokar is a known shortcut for the full batch server name sokar-pbs.ncbr.muni.cz. You can use either of them. For more information about accessing information from other clusters, read this section of the manual.

qq nodes --yaml

Prints a summary of all available nodes associated with the default batch server in YAML format. This output contains the full metadata provided by the batch system.

Notes

- The availability state of nodes is not always perfectly reliable. Occasionally, nodes that are actually unavailable may still be reported as available.

Description of the output

- You can customize the appearance of the output using a configuration file.

- Columns for resources that are not relevant to a given node group (e.g., when no node in the group has GPUs) are hidden.

- For some node groups, there may also be a Scratch Shared column specifying the amount of scratch space available to be shared among the nodes.

qq queues

The qq queues command displays the queues available on the current batch server. It is qq's equivalent of Infinity's pqueues.

Quick comparison with pqueues

- qq queues is generally more accurate at identifying available and unavailable queues than pqueues.

- The only other notable difference is the output format.

Description

Displays information about the queues available on the current batch server. By default, only queues that are available to you are shown.

qq queues [OPTIONS]

Options

-a, --all — Display all queues, including those that are not available to you.

-s TEXT, --server TEXT — Show queues for a specific batch server. If not specified, queues for the default batch server are shown.

--yaml — Output queue metadata in YAML format.

Examples

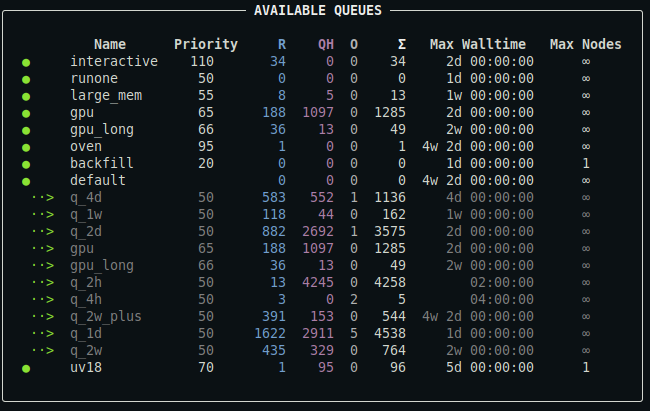

qq queues

Displays a summary of all queues associated with the default batch server to which you can submit jobs.

This is what the output might look like:

For a detailed description of the output, see below.

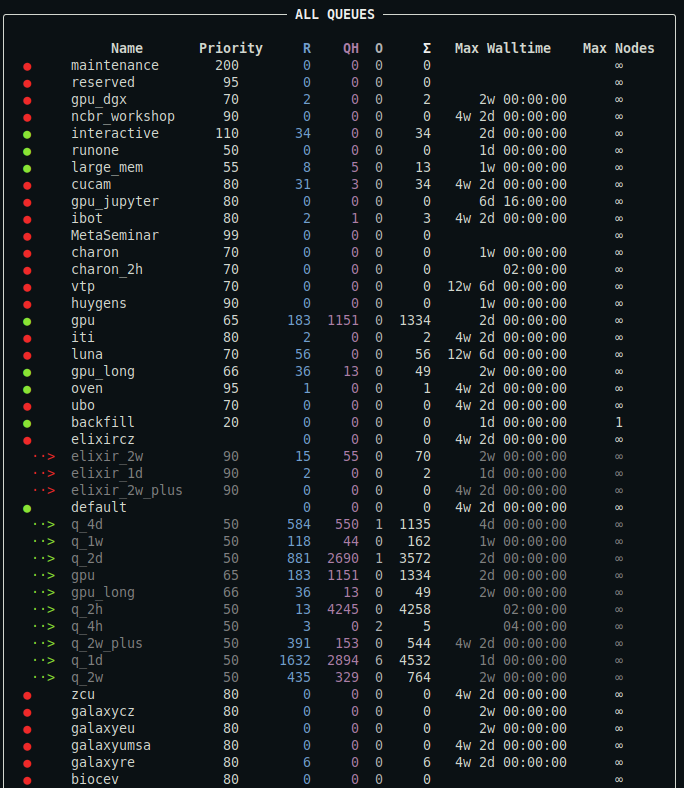

qq queues --all

Displays a summary of all queues associated with the default batch server, including those you cannot submit jobs to.

This is what the output might look like:

Output truncated. For a detailed description of the output, see below.

qq queues --server metacentrum

Displays a summary of all queues associated with the metacentrum batch server that are available to you. metacentrum is a known shortcut for the full batch server name pbs-m1.metacentrum.cz. You can use either of them. For more information about accessing information from other clusters, read this section of the manual.

qq queues --yaml

Prints a summary of all available queues in YAML format. This output contains the full metadata provided by the batch system.

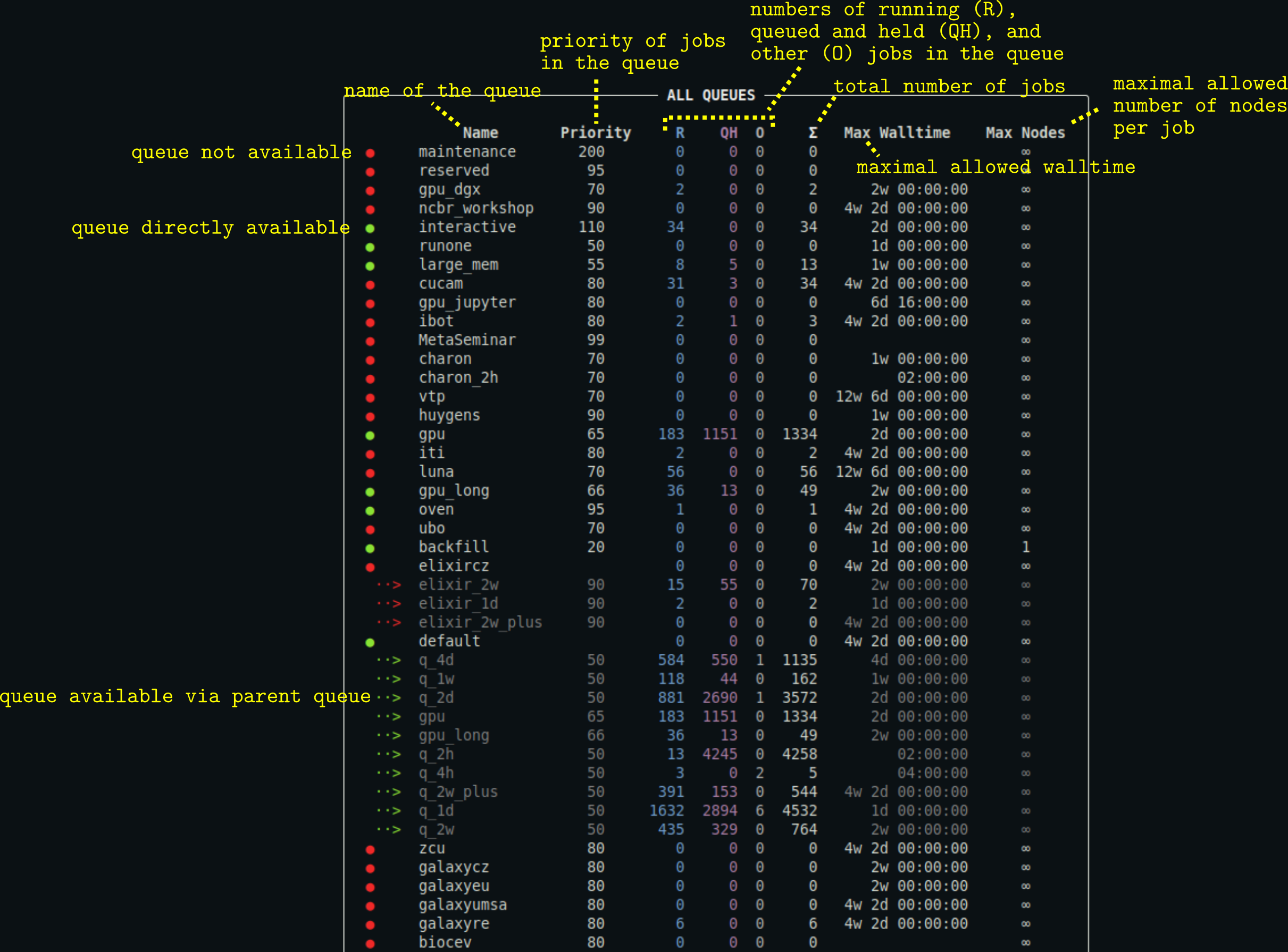

Description of the output

- You can customize the appearance of the output using a configuration file.
- The output may also contain the Comment column, providing the comment associated with the queue (typically additional information about the queue).
- The Max Nodes column is hidden if no queue defines a maximum allowed number of requested nodes per job.

qq respawn

The qq respawn command is used to "respawn" jobs, i.e. put failed or killed jobs back into the queue to be retried. It has no direct equivalent in Infinity.

Imagine you find that your job has failed because it unexpectedly reached a walltime limit (e.g., because it was running on a slow node). You want to just put the job back into the queue to be retried. Normally, you would run something like the following sequence of commands:

# go to the directory with the crashed job
qq cd <crashed-job-id>
# remove the working directory (optional)
qq wipe
# clear the runtime files from the crashed job
qq clear
# submit the job to the queue with the same parameters
qq submit -q <queue> --ncpus 8 --ngpus 1 --walltime 1d <script-name>

With qq respawn, you can just run:

# remove the working directory, clear runtime files,
# and submit a new job with the original parameters
qq respawn <crashed-job-id>

Description

Respawns the specified qq job(s), or all qq jobs submitted from the current directory.

qq respawn [OPTIONS] JOB_ID

JOB_ID — One or more IDs of jobs to respawn. Optional.

If no JOB_ID is provided, qq respawn searches for qq jobs in the current directory. If multiple suitable jobs are found, qq respawn respawns each one in turn.

Examples

qq respawn 123456

Respawns the job with ID 123456 located on the default batch server. If the job is located on a different batch server, you need to use the full ID including the server address.

Only failed and killed jobs can be respawned. If you try to respawn a job in any other state, you will get an error.

qq respawn 123456 144844 156432

Respawns jobs 123456, 144844, and 156432, if they are suitable.

qq respawn

Respawns all suitable qq jobs whose info files are present in the current directory (typically one, since qq requires one job per directory).

qq shebang

The qq shebang command is a utility for converting regular scripts into qq-compatible scripts. It has no direct equivalent in Infinity.

Description

Adds the qq run shebang to a script, or replaces an existing one. If no script is specified, it simply prints the qq run shebang to standard output.

qq shebang [OPTIONS] SCRIPT

SCRIPT — Path to the script to modify. This argument is optional.

Examples

Suppose we have a script named run_script.sh with the following content:

#!/bin/bash

# activate the Gromacs module

metamodule add gromacs/2024.3-cuda

# prepare a TPR file

gmx_mpi grompp -f md.mdp -c eq.gro -t eq.cpt -n index.ndx -p system.top -o md.tpr

# run the simulation using 8 OpenMP threads

gmx_mpi mdrun -deffnm md -ntomp 8 -v

This script cannot be submitted using qq submit because it lacks the qq run shebang.

By running:

qq shebang run_script.sh

the existing bash shebang is replaced with the qq run shebang, resulting in:

#!/usr/bin/env -S qq run

# activate the Gromacs module

metamodule add gromacs/2024.3-cuda

# prepare a TPR file

gmx_mpi grompp -f md.mdp -c eq.gro -t eq.cpt -n index.ndx -p system.top -o md.tpr

# run the simulation using 8 OpenMP threads

gmx_mpi mdrun -deffnm md -ntomp 8 -v

If you run qq shebang without specifying a script, it simply prints the qq run shebang to standard output:

#!/usr/bin/env -S qq run
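The effect described above (add the qq run shebang, or replace an existing one) can be approximated with standard tools. The following is only a sketch of the observable behavior, not qq's actual implementation; the function name set_qq_shebang is hypothetical.

```shell
# Hypothetical helper that mimics the documented effect of `qq shebang SCRIPT`.
# Illustration only; this is not how qq itself is implemented.
QQ_SHEBANG='#!/usr/bin/env -S qq run'

set_qq_shebang() {
    script=$1
    tmp=$(mktemp) || return 1
    first_line=$(head -n 1 "$script")
    case $first_line in
        '#!'*)
            # an existing shebang is replaced: keep everything after line 1
            { printf '%s\n' "$QQ_SHEBANG"; tail -n +2 "$script"; } > "$tmp"
            ;;
        *)
            # no shebang present: prepend one and keep the whole file
            { printf '%s\n' "$QQ_SHEBANG"; cat "$script"; } > "$tmp"
            ;;
    esac
    # write back through redirection so the file keeps its permissions
    cat "$tmp" > "$script"
    rm -f "$tmp"
}
```

In practice you would simply run qq shebang run_script.sh; the sketch only makes the "add or replace" rule explicit.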

qq run

The qq run command represents the execution environment in which a qq job runs. It is qq's equivalent of Infinity's infex script and the infinity-env.

You should not invoke qq run directly. Instead, every script submitted with qq submit must include the following shebang line:

#!/usr/bin/env -S qq run

For more details about what qq run does, see the sections on standard jobs and loop jobs.

Quick comparison with infex and infinity-env

- Like infinity-env, using the qq run shebang prevents you from accidentally running the script directly.
- Unlike Infinity, all qq jobs must use this execution environment; no separate helper run script is created when submitting a qq job.
- qq run also takes over the responsibilities of parchive and presubmit, which have no direct equivalents in qq.

qq stat

The qq stat command displays information about jobs from all users. It is qq's equivalent of Infinity's pqstat.

Quick comparison with pqstat

- The same differences that apply between qq jobs and pjobs also apply here.

Description

Displays a summary of jobs from all users. By default, only uncompleted jobs are shown.

qq stat [OPTIONS]

Options

-e, --extra — Include extra information about the jobs.

-a, --all — Include both uncompleted and completed jobs in the summary.

-s TEXT, --server TEXT — Show jobs for a specific batch server. If not specified, jobs on the default batch server are shown.

--yaml — Output job metadata in YAML format.

Examples

qq stat

Displays a summary of all uncompleted (queued, running, or exiting) jobs associated with the default batch server. The display looks similar to the display of qq jobs.

qq stat -e

Includes extra information about the jobs in the output: the input machine (if available), the input directory, and the job comment (if available).

qq stat --all

Displays a summary of all jobs associated with the default batch server, both uncompleted and completed. Note that the batch system eventually removes records of completed jobs, so they may disappear from the output over time.

qq stat --server sokar

Displays a summary of all uncompleted jobs associated with the sokar batch server. sokar is a known shortcut for the full batch server name sokar-pbs.ncbr.muni.cz. You can use either of them. For more information about accessing information from other clusters, read this section of the manual.

qq stat --yaml

Prints a summary of all uncompleted jobs in YAML format. This output contains all metadata provided by the batch system.

Notes

- This command lists all types of jobs, including those submitted using qq submit and jobs created through other tools.
- The run times and job states may not exactly match the output of qq info, since qq stat relies solely on batch system data and does not use qq info files.

qq submit

The qq submit command is used to submit qq jobs to the batch system. It is qq's equivalent of Infinity's psubmit.

Quick comparison with psubmit

- qq submit does not ask for confirmation; it behaves like psubmit (...) -y.

- Options and parameters are specified differently. The only positional argument is the script name; everything else is an option. You can see all supported options using qq submit --help.

  Infinity:

  psubmit cpu run_script ncpus=8,walltime=12h,props=cl_zero -y

  qq:

  qq submit -q cpu run_script --ncpus 8 --walltime 12h --props cl_zero

- Options can also be specified directly in the submitted script, or as a mix of in-script and command-line definitions. Command-line options always take precedence.

- Unlike with psubmit, you do not have to execute qq submit directly from the directory with the submitted script. You can run qq submit from anywhere and provide the path to your script. The job's input directory will always be the submitted script's parent directory.

- qq submit has better support for multi-node jobs than psubmit, as it allows specifying resource requirements per requested node.
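The difference in how resources are written can be illustrated with a small conversion sketch. The helper psubmit_to_qq_opts below is hypothetical and not part of qq or Infinity. It assumes every item has the form key=value and that the key is also a valid qq submit option name, which holds for the three keys in the example above but is not guaranteed for every Infinity resource.

```shell
# Hypothetical converter: turns a psubmit-style resource string into
# qq submit options. Assumes each item is key=value and that each key
# matches a qq submit option name (an assumption, not a guarantee).
psubmit_to_qq_opts() {
    resources=$1
    opts=
    old_ifs=$IFS
    IFS=,
    for item in $resources; do
        key=${item%%=*}      # text before the first '='
        value=${item#*=}     # text after the first '='
        opts="$opts --$key $value"
    done
    IFS=$old_ifs
    # strip the leading space before printing
    printf '%s\n' "${opts# }"
}

psubmit_to_qq_opts 'ncpus=8,walltime=12h,props=cl_zero'
# prints: --ncpus 8 --walltime 12h --props cl_zero
```

The printed options can then be appended to qq submit -q cpu run_script, giving the qq command shown in the comparison.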

Description

Submits a qq job to the batch system.

qq submit [OPTIONS] SCRIPT

SCRIPT — Path to the script to submit.

The submitted script must contain the qq run shebang. You can add it to your script by running qq shebang SCRIPT.
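If you want to check a script before submitting it, the requirement above can be tested by looking at the script's first line. This is a minimal sketch; the helper names has_qq_shebang and check_before_submit are hypothetical, and the check assumes the first line must match the shebang shown in this manual exactly. qq submit performs its own validation regardless.

```shell
# Hypothetical pre-submission check (not part of qq).
# Assumes the shebang must match the documented line exactly.
has_qq_shebang() {
    first_line=$(head -n 1 "$1")
    [ "$first_line" = '#!/usr/bin/env -S qq run' ]
}

# Prints a short hint about what to do next for the given script.
check_before_submit() {
    if has_qq_shebang "$1"; then
        echo "$1: ready for qq submit"
    else
        echo "$1: missing qq run shebang, run: qq shebang $1"
    fi
}
```

For example, check_before_submit run_script.sh would suggest running qq shebang run_script.sh for the unmodified bash script shown in the qq shebang section.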

When the job is successfully submitted, qq submit creates a .qqinfo file for tracking the job's state.

Options

General settings

-q, --queue TEXT — Name of the queue to submit the job to.

-s, --server TEXT — Name of the batch server to submit the job to. If not specified, the job is submitted to the default batch server. Only supported on Metacentrum-family clusters. Read more about specifying a server here.

--account TEXT — Account to use for the job. Required only in environments with accounting (e.g., IT4Innovations).

--job-type TEXT — Type of the job. Defaults to standard. Available types: 'standard', 'loop', 'continuous'. Read more about job types here.

--exclude TEXT — Colon-, comma-, or space-separated list of files or directories that should not be copied to the working directory. Paths must be relative to the input directory.

--include TEXT — Colon-, comma-, or space-separated list of files or directories to copy into the working directory in addition to the input directory contents. These files are not copied back after job completion. Paths must be absolute or relative to the input directory. Ignored if the input directory is used as the working directory.

--depend TEXT — Comma- or space-separated list of job dependencies in the format '

--transfer-mode TEXT — Colon-, comma-, or space-separated list of transfer modes controlling when working directory files are transferred to the input directory. Modes: success (exit code 0), failure (non-zero exit code), always, never, or a specific exit code number (e.g., 42). Combine modes; files transfer if any apply. Defaults to success. On transfer, the working directory is deleted; otherwise it is preserved. Killed jobs are never transferred automatically. Ignored if the input directory is used as the working directory. Examples: 'success', 'always', 'success:42', '1 2 3'. Read more about transfer modes here.

--interpreter TEXT — Executable name or absolute path of the interpreter used to run the submitted script, including options for the interpreter. The interpreter must be available on the computing node. Defaults to bash. Read more about specifying interpreters here.

--batch-system TEXT — Name of the batch system used to submit the job. If not specified, the value of the environment variable 'QQ_BATCH_SYSTEM' is used or the system is auto-detected.
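The rule for combining transfer modes ("files transfer if any apply") can be made concrete with a short sketch. The function should_transfer is hypothetical and only models the rules stated for --transfer-mode above; it does not model killed jobs (which are never transferred automatically) or the case where the input directory is used as the working directory. It also assumes that never simply contributes nothing when combined with other modes.

```shell
# Illustration of the documented rule "files transfer if any mode applies".
# should_transfer is a hypothetical helper, not part of qq.
# Usage: should_transfer MODES EXIT_CODE  (returns 0 if files would transfer)
should_transfer() {
    modes=$1
    exit_code=$2
    # modes may be separated by colons, commas, or spaces
    for mode in $(printf '%s' "$modes" | tr ':,' '  '); do
        case $mode in
            always)
                return 0 ;;
            never)
                ;;  # assumed to contribute nothing
            success)
                if [ "$exit_code" -eq 0 ]; then return 0; fi ;;
            failure)
                if [ "$exit_code" -ne 0 ]; then return 0; fi ;;
            *)
                # a specific exit code number, e.g. 42
                if [ "$mode" = "$exit_code" ]; then return 0; fi ;;
        esac
    done
    return 1
}

if should_transfer 'success:42' 42; then
    echo "files are transferred, working directory is deleted"
else
    echo "working directory is preserved"
fi
# prints: files are transferred, working directory is deleted
```

With the default mode success, a job that exits with code 1 leaves its files in the working directory.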

Requested resources

Memory and storage sizes are specified as 'N

Job resources are described in more detail in the Job resources section.

--nnodes INTEGER — Number of nodes to allocate for the job.

--ncpus-per-node INTEGER — Number of CPU cores to allocate per node.

--ncpus INTEGER — Total number of CPU cores to allocate for the job. Overrides --ncpus-per-node.

--mem-per-cpu TEXT — Memory to allocate per CPU core.

--mem-per-node TEXT — Memory to allocate per node. Overrides --mem-per-cpu.

--mem TEXT — Total memory to allocate for the job. Overrides --mem-per-cpu and --mem-per-node.

--ngpus-per-node INTEGER — Number of GPUs to allocate per node.

--ngpus INTEGER — Total number of GPUs to allocate for the job. Overrides --ngpus-per-node.

--walltime TEXT — Maximum runtime for the job. Examples: '1d', '12h', '10m', '24:00:00'.

--work-dir, --workdir TEXT — Type of working directory to use for the job. Available types depend on the environment.

--work-size-per-cpu, --worksize-per-cpu TEXT — Storage to allocate per CPU core.

--work-size-per-node, --worksize-per-node TEXT — Storage to allocate per node. Overrides --work-size-per-cpu.

--work-size, --worksize TEXT — Total storage to allocate for the job. Overrides --work-size-per-cpu and --work-size-per-node.

--props TEXT — Colon-, comma-, or space-separated list of node properties required (e.g., cl_two) or prohibited (e.g., ^cl_two) to run the job.
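To compare the walltime notations listed for --walltime, it can help to convert them to seconds. The helper walltime_to_seconds below is a hypothetical sketch, not qq's parser. It handles only the four forms shown in the examples and assumes that d, h, and m stand for days, hours, and minutes, and that the colon form is HH:MM:SS.

```shell
# Hypothetical helper converting the documented walltime examples to seconds.
# Handles only: Nd (days), Nh (hours), Nm (minutes), and HH:MM:SS.
walltime_to_seconds() {
    wt=$1
    case $wt in
        *:*:*)
            # awk treats fields such as "08" as decimal numbers
            printf '%s\n' "$wt" | awk -F: '{ print $1 * 3600 + $2 * 60 + $3 }'
            ;;
        *d) echo $(( ${wt%d} * 86400 )) ;;
        *h) echo $(( ${wt%h} * 3600 )) ;;
        *m) echo $(( ${wt%m} * 60 )) ;;
        *)
            echo "unsupported walltime: $wt" >&2
            return 1
            ;;
    esac
}

walltime_to_seconds 1d         # prints 86400
walltime_to_seconds 24:00:00   # prints 86400
walltime_to_seconds 12h        # prints 43200
```

Under these assumptions, '1d' and '24:00:00' request the same maximum runtime.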

Settings for continuous and loop jobs

Only used when job-type is continuous or loop.